AI is reshaping the software development process. From Karpathy’s concept of “Vibe Coding,” we can foresee fundamental changes in collaboration, tools, and thought processes in future product development. This article helps you understand the impact of this technological transformation on product managers and how to prepare for it.

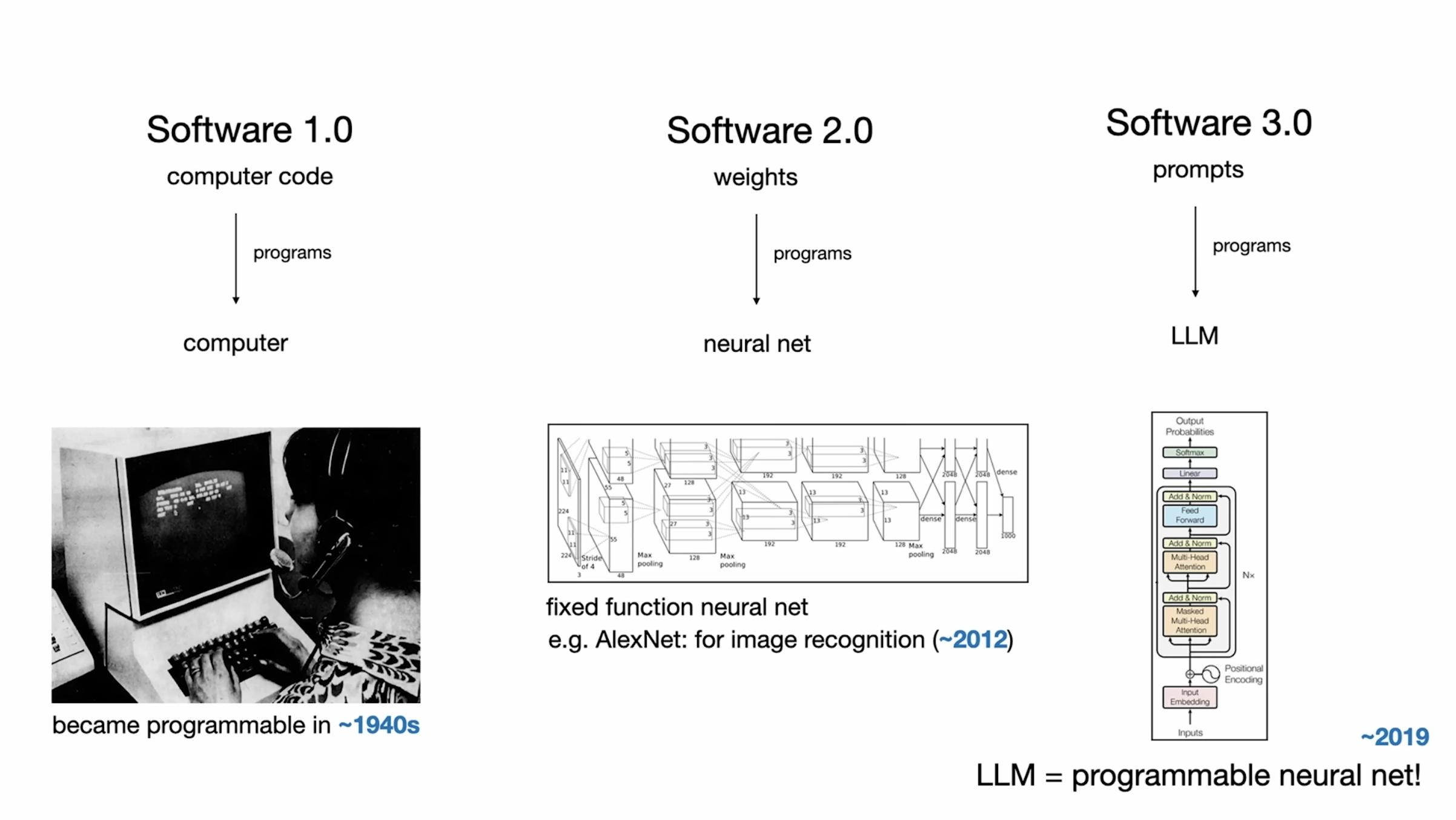

Karpathy believes software is undergoing a third major paradigm shift:

Karpathy believes software is undergoing a third major paradigm shift:

- Software 1.0 (human-written logic),

- Software 2.0 (neural networks learning from data),

- Software 3.0 (programming with natural language).

This means everyone can be a programmer, and “vibe coding” is becoming a reality.

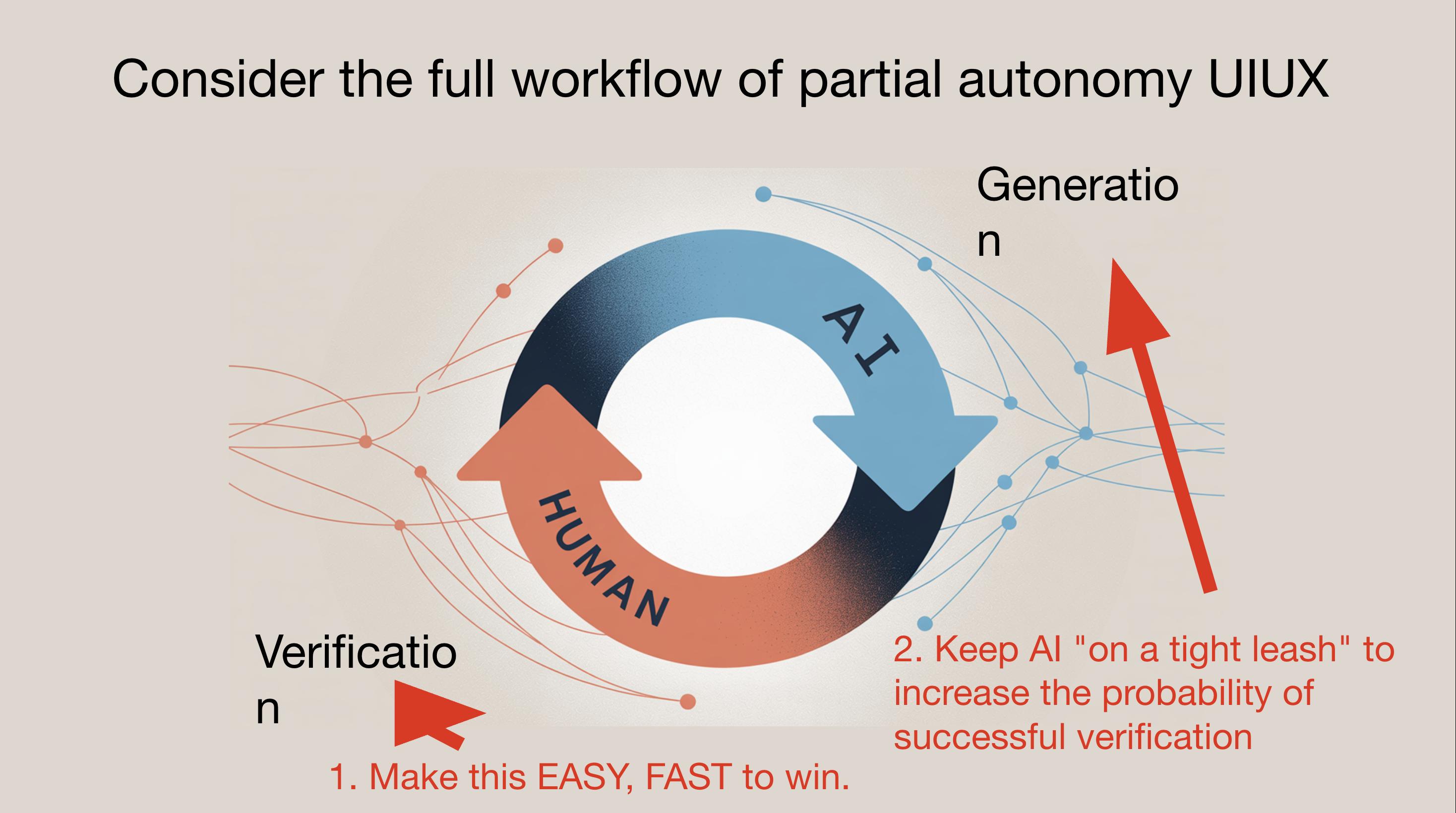

LLM agents must be managed on-the-leash, verifying reliability at low autonomy levels before gradually loosening permissions. This approach prevents ‘over-reactive agents’ from causing uncontrollable risks and maintains a rapid Generation ↔ Verification cycle.

Software Has Changed Again: 1.0 → 2.0 → 3.0

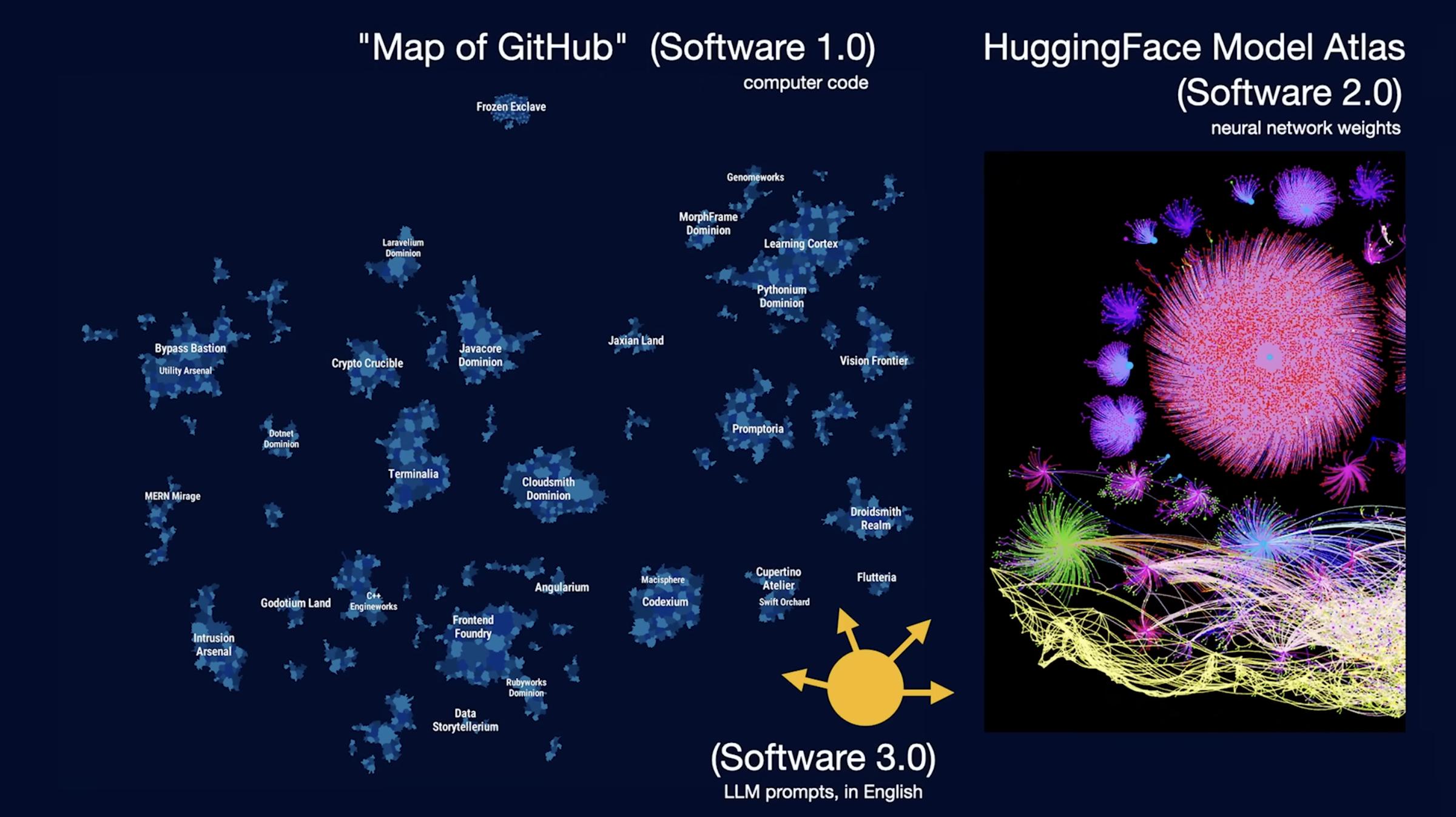

- Software 1.0: Purely human-written instructions.

- Software 2.0: Data + optimizers produce weights, where “weights are code.”

- Software 3.0: Prompts are programs, with LLMs acting as programmable computers; English has become the “main programming language.”

“We’re now programming computers in English.”

Software 1.0 — Traditional Explicit Code

- Operating System Kernels: Linux Kernel, Windows NT, etc. All written by human engineers in C/C++.

- Classic Backend/Frontend Frameworks: Django, Spring, React, Vue, etc. Frameworks and business logic are written in source code hosted on GitHub.

- Game Engine Scripts: Unity C# scripts, Unreal C++ modules, where gameplay and rules are implemented line by line by developers.

Characteristics: Logic is deterministic, readable, and statically analyzable. The main products are text source files like .c, .cpp, .py, .js, etc.

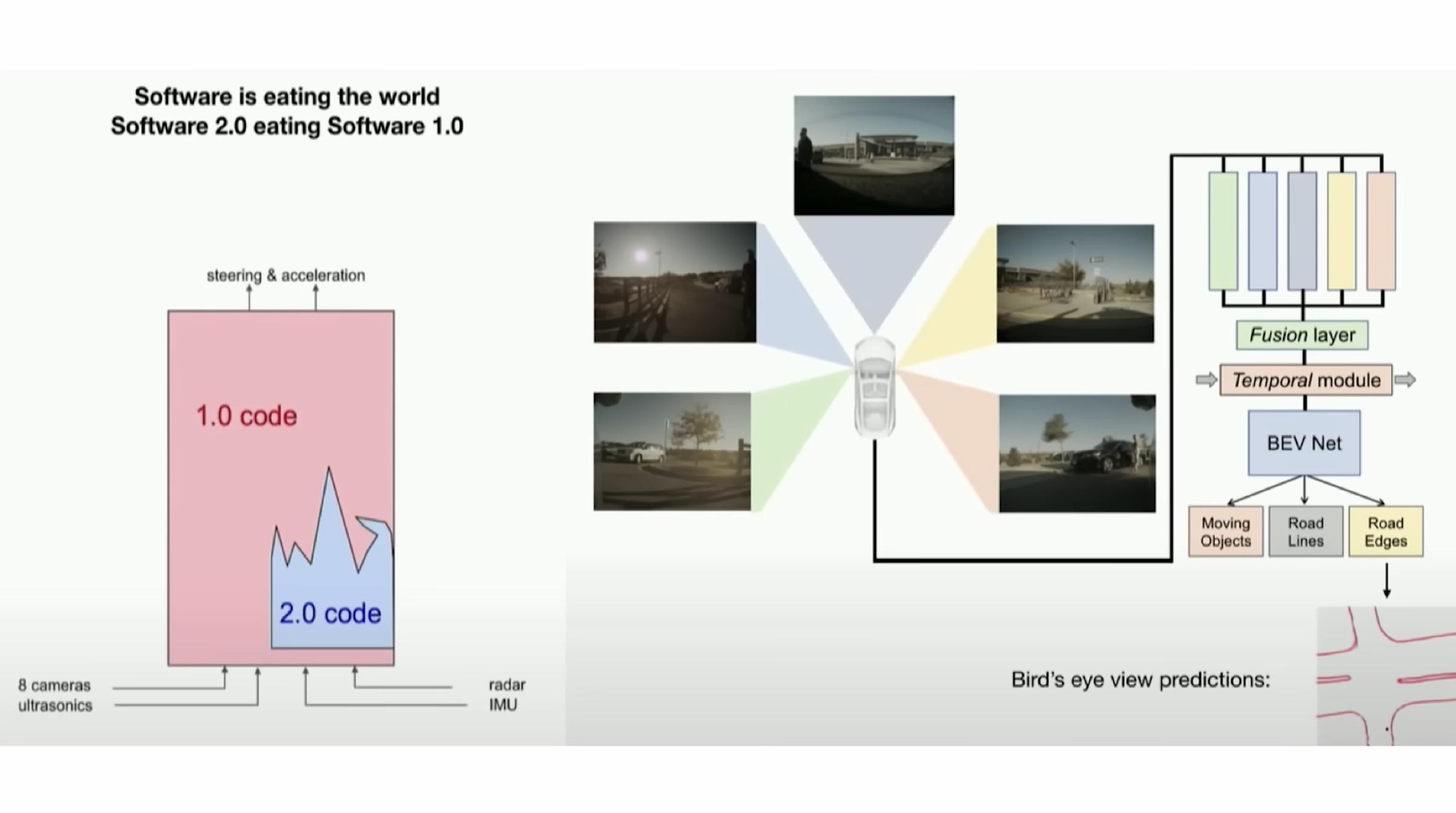

Software 2.0 — Trained Weights as Code

- Deep Learning Models: AlexNet, ResNet, YOLO, Stable Diffusion—network structures are written by humans, but the actual tasks are executed by billions of floating-point weights.

- Hugging Face Model Hub: Contains pytorch_model.bin/safetensors weight files, typical “code units” of Software 2.0.

- Autonomous Driving Perception Stack: Tesla’s early Autopilot visual recognition network: camera frames → detection/segmentation results, with weights trained from large-scale labeled data.

Characteristics: The focus of development activities shifts from “writing rules” to “preparing data + training + tuning parameters.” The product is weight files, which humans can hardly read or modify directly.

Software 3.0 — Writing Programs with Natural Language + Toolchains

- LLM APIs like ChatGPT/Claude/Gemini: Prompts are programs, calling interfaces executes them; composite calls + tool use form “software.”

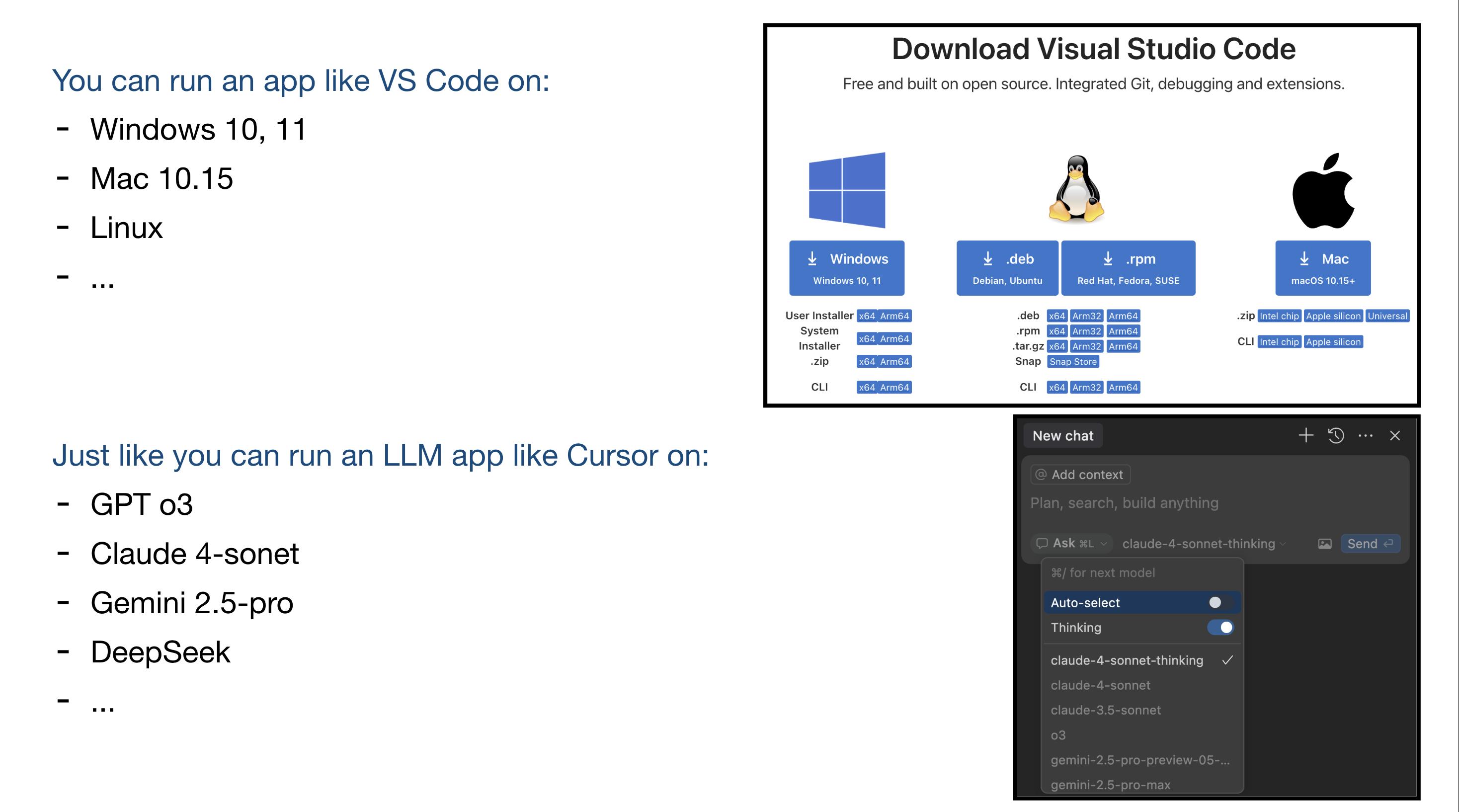

- AI Programming IDEs (Cursor, Devon, GitHub Copilot Chat): Users converse in English/Chinese, allowing LLMs to generate, modify, and explain code in local repositories; the Autonomy Slider determines the depth of automation.

- No-Code Agent Platforms: Such as LangChain Agents, OpenAI Function Calling + external tools, where users describe intentions using YAML/JSON, and LLMs handle decision-making and calls.

Characteristics: “Source code” transforms into prompts + configuration files + a series of tool calls. LLMs possess reasoning and planning abilities, enabling new behaviors at runtime; humans mainly focus on constraints and verification (on-the-leash).

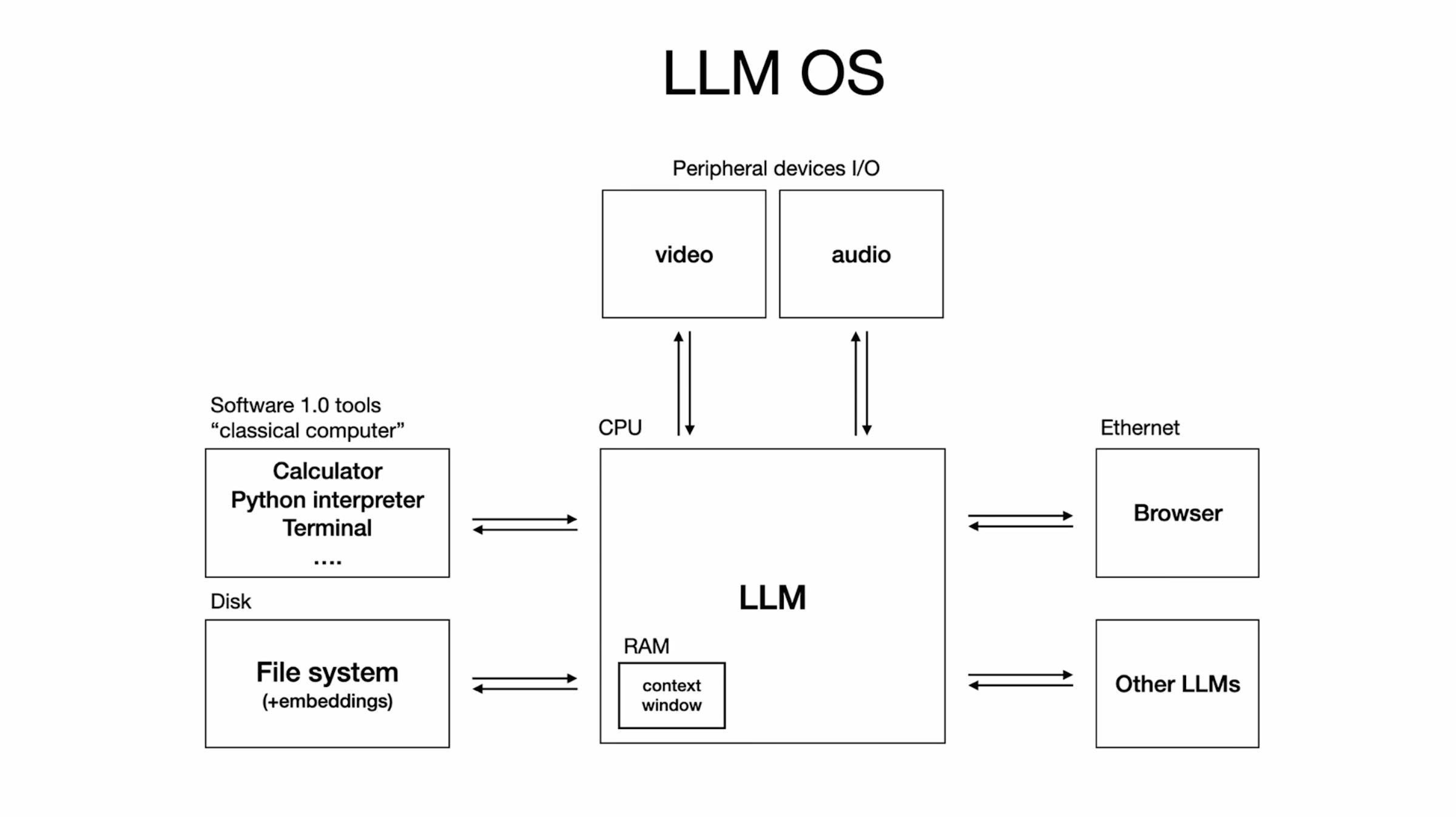

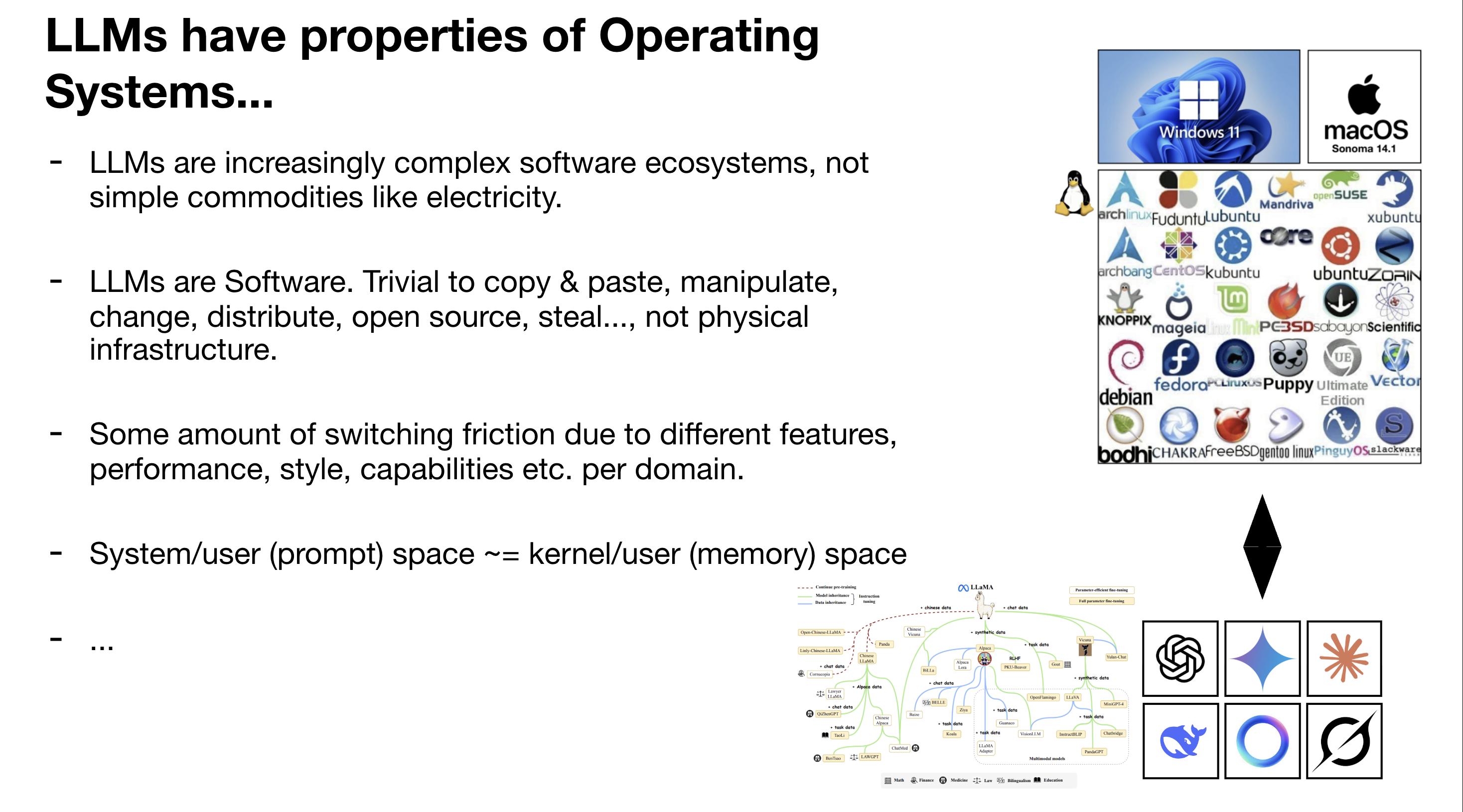

LLM as a Computer/Operating System Analogy

Karpathy uses multiple analogies to position LLMs: like a “utility” providing pay-per-use intelligence services; like an “operating system (OS)” with a continuously evolving complex ecosystem; and reminiscent of the mainframe era of the 60s, where we interact through “terminals” (chat boxes).

These analogies emphasize that LLMs are not just simple APIs but programmable new computers with memory, tools, and orchestration capabilities, with GUI forms still in early exploration.

LLM’s Psychology and Programming Language: English

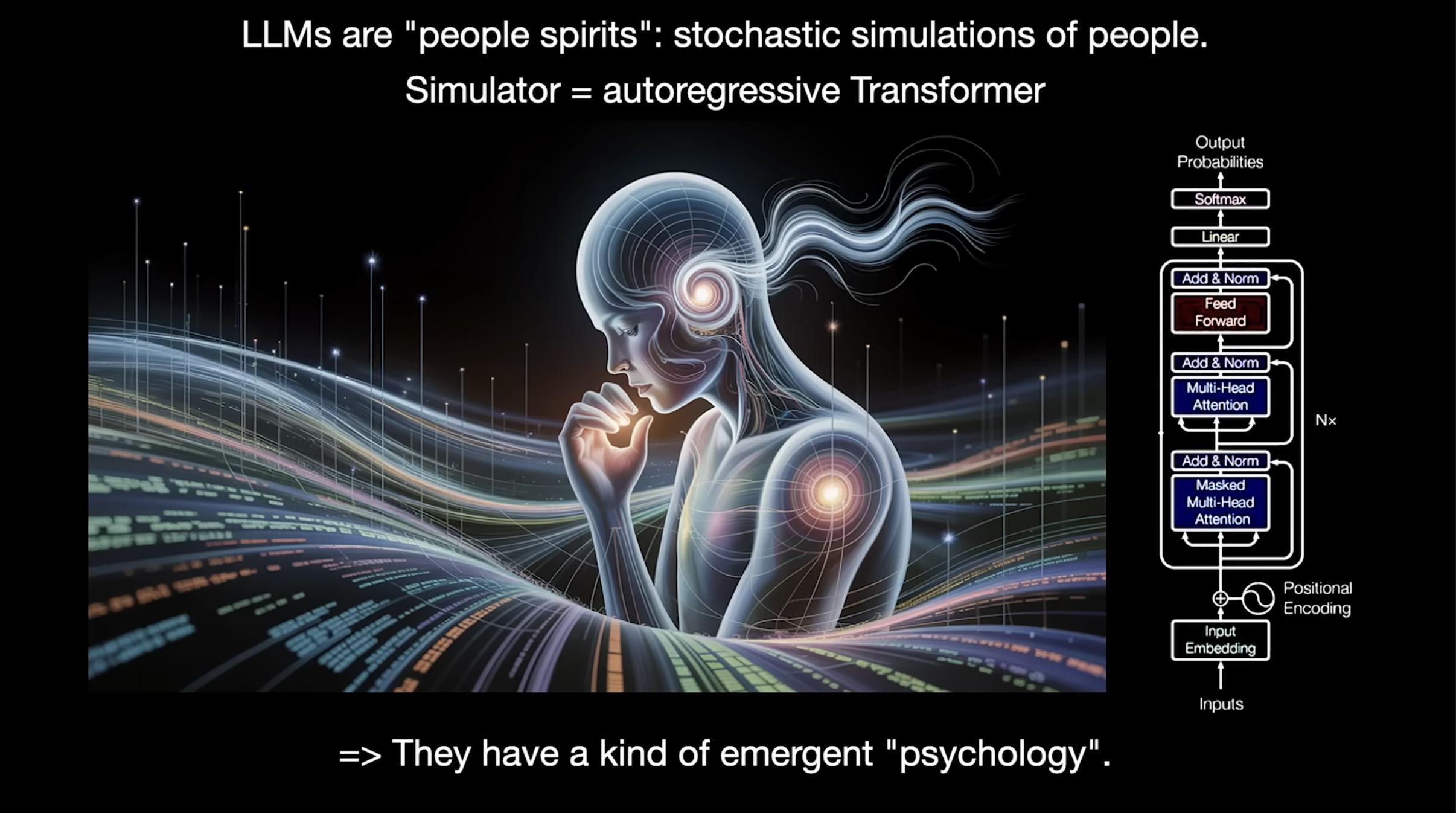

Karpathy likens LLMs to “stochastic little spirits,” formed by autoregressive transformers fitting vast amounts of text, exhibiting anthropomorphic cognitive traits: broad but forgetful, capable of reasoning yet prone to ‘hallucinations.’

- Jagged Intelligence: Extremely strong in certain tasks but may err in basic logic (e.g., comparing 9.11 with 9.9).

- Anterograde Amnesia: Cannot continue learning after training, lacking long-term memory.

- Hallucinations: Fabricating false facts.

- Prompt Injection: Easily deceived by malicious instructions.

These flaws mean LLMs cannot be left to operate autonomously; human supervision and constraint mechanisms must be established.

Thus, English becomes the new programming language—writing high-quality, executable natural language instructions is a core skill of Software 3.0.

From Vibe Coding to Practical Challenges

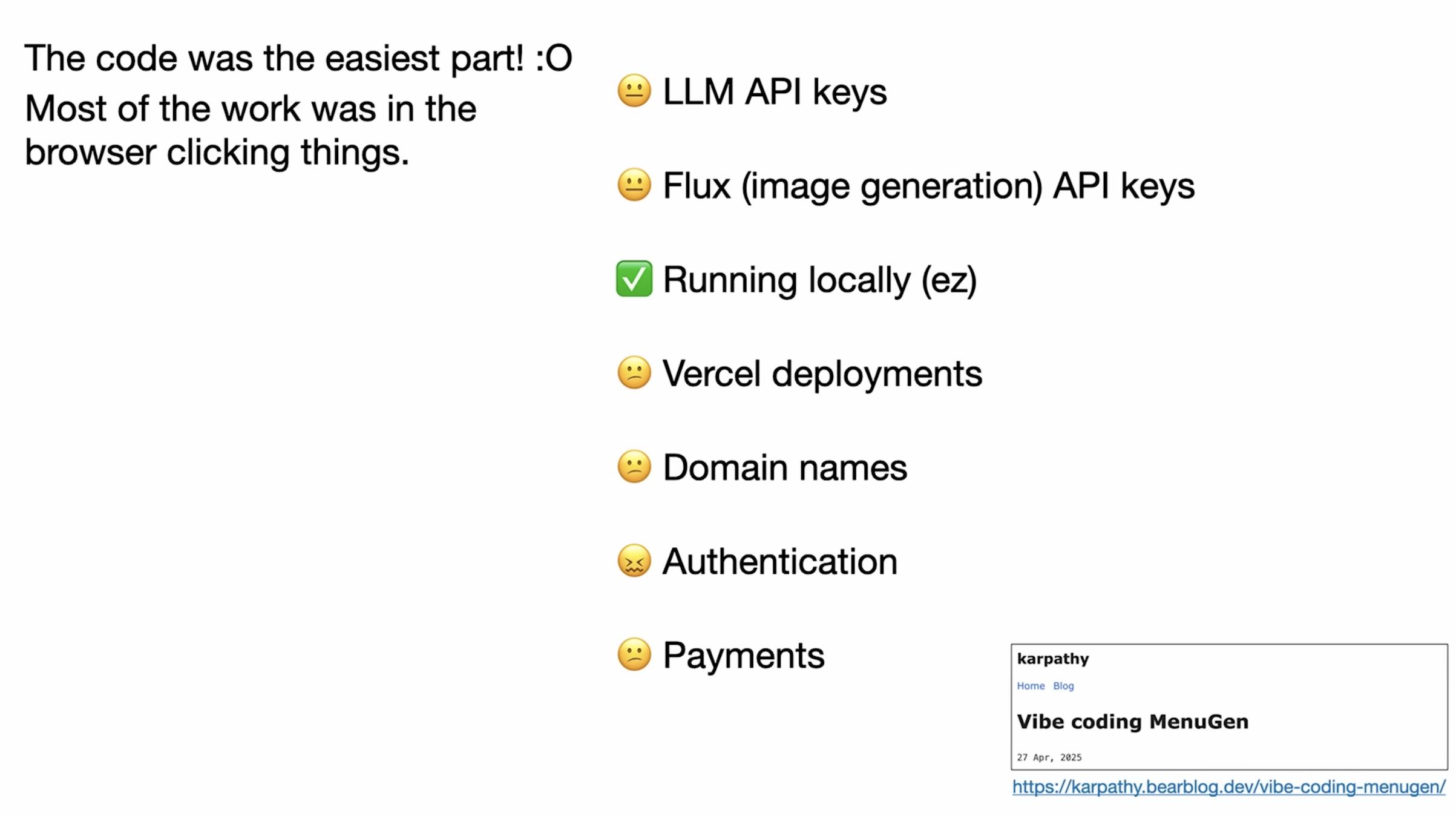

In his talk, Karpathy demonstrated quickly prototyping through dialogue (“I say what I want, and it writes the code; I then run/improve it”).

- The Easy Part: Quickly creating a “working demo” with LLMs.

- The Hard Part: Making it stable, maintainable, and deployable—this is the gap he repeatedly mentions: a demo is works.any, but a product must work for all users, scenarios, and inputs consistently.

Partially Autonomous Products: Best Practices for Human-Machine Collaboration

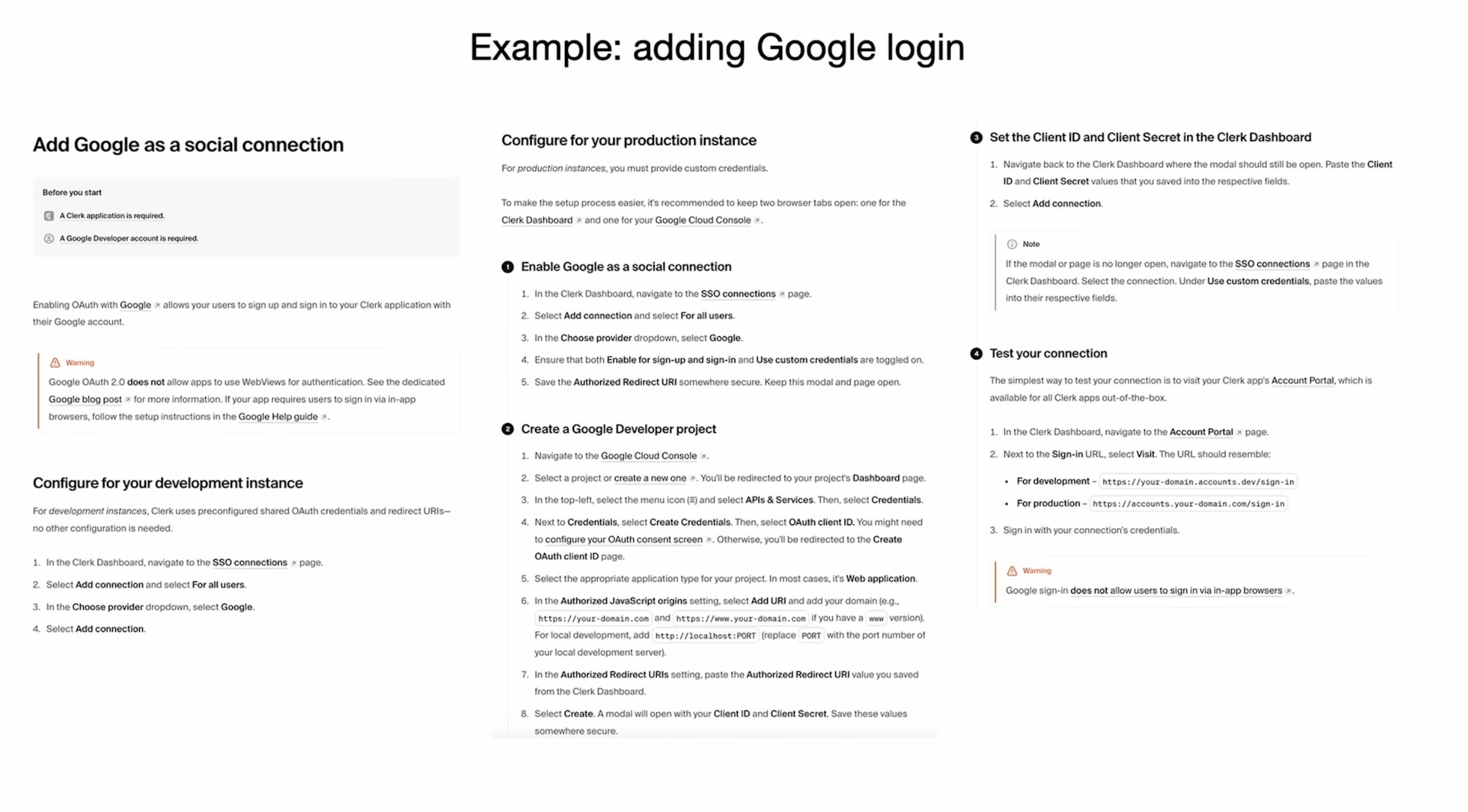

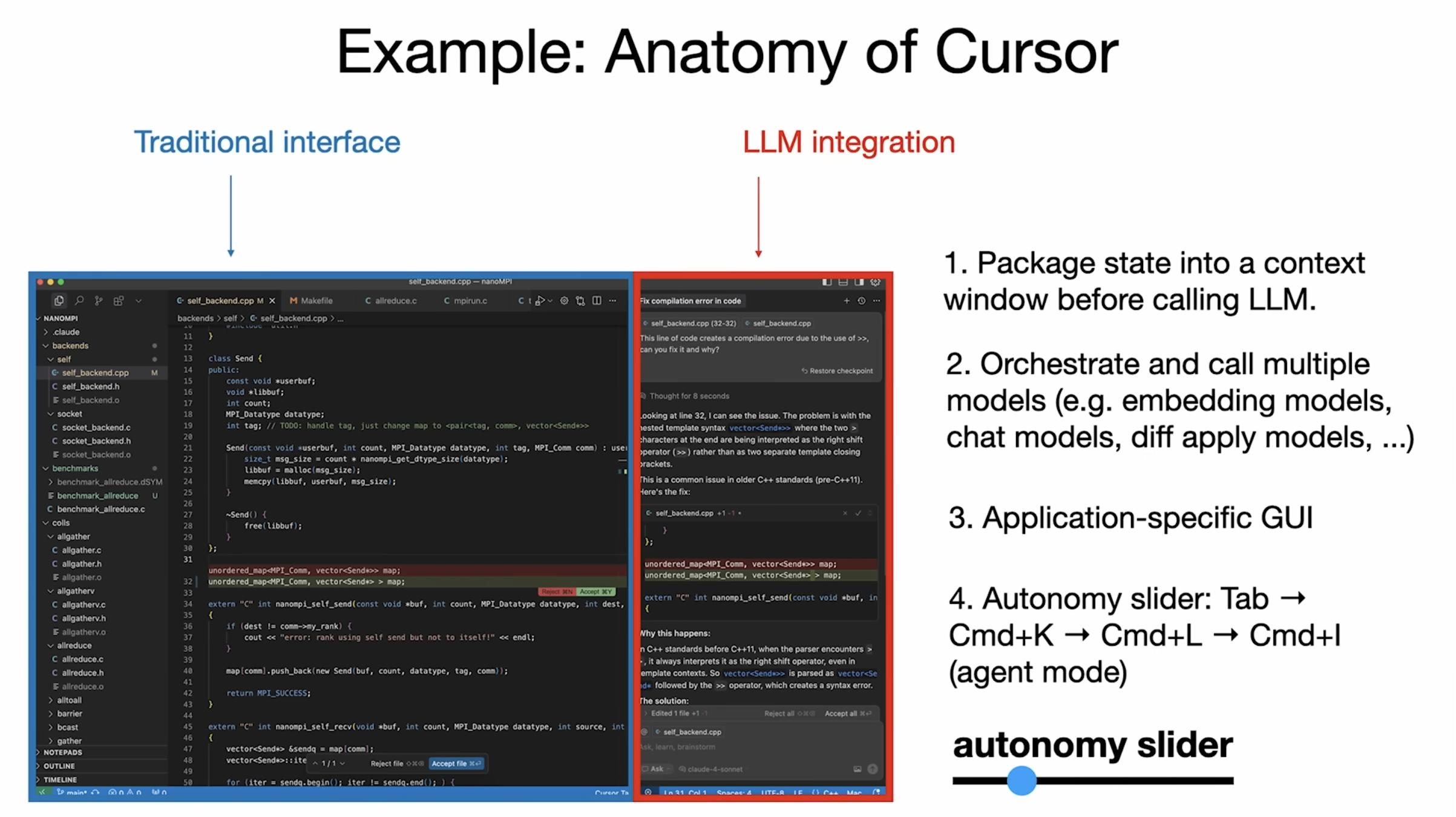

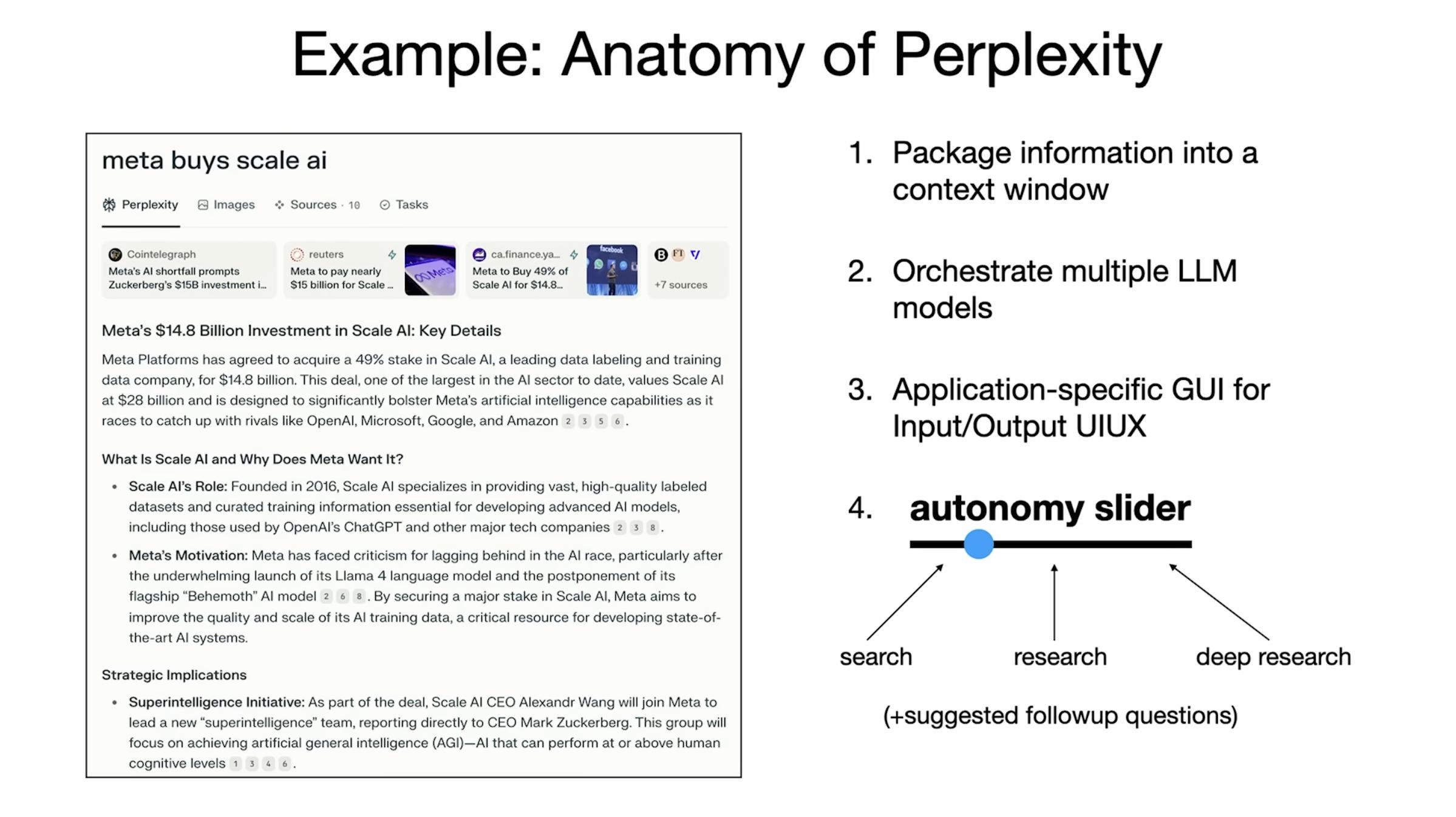

This section is the core of the product methodology, where Karpathy uses Cursor (AI IDE) and Perplexity (AI Search) as examples:

Common Patterns

- LLMs manage context and multi-turn calls, while GUIs allow humans to review and roll back at minimal cost.

- Products are built around a rapid closed loop of “Generation ↔ Verification”: LLMs provide drafts/diffs/references, and humans quickly review, revert, and iterate.

The design goal is to reduce verification costs (e.g., diff views, color highlights, grouped changes, one-click undo).

Autonomy Slider

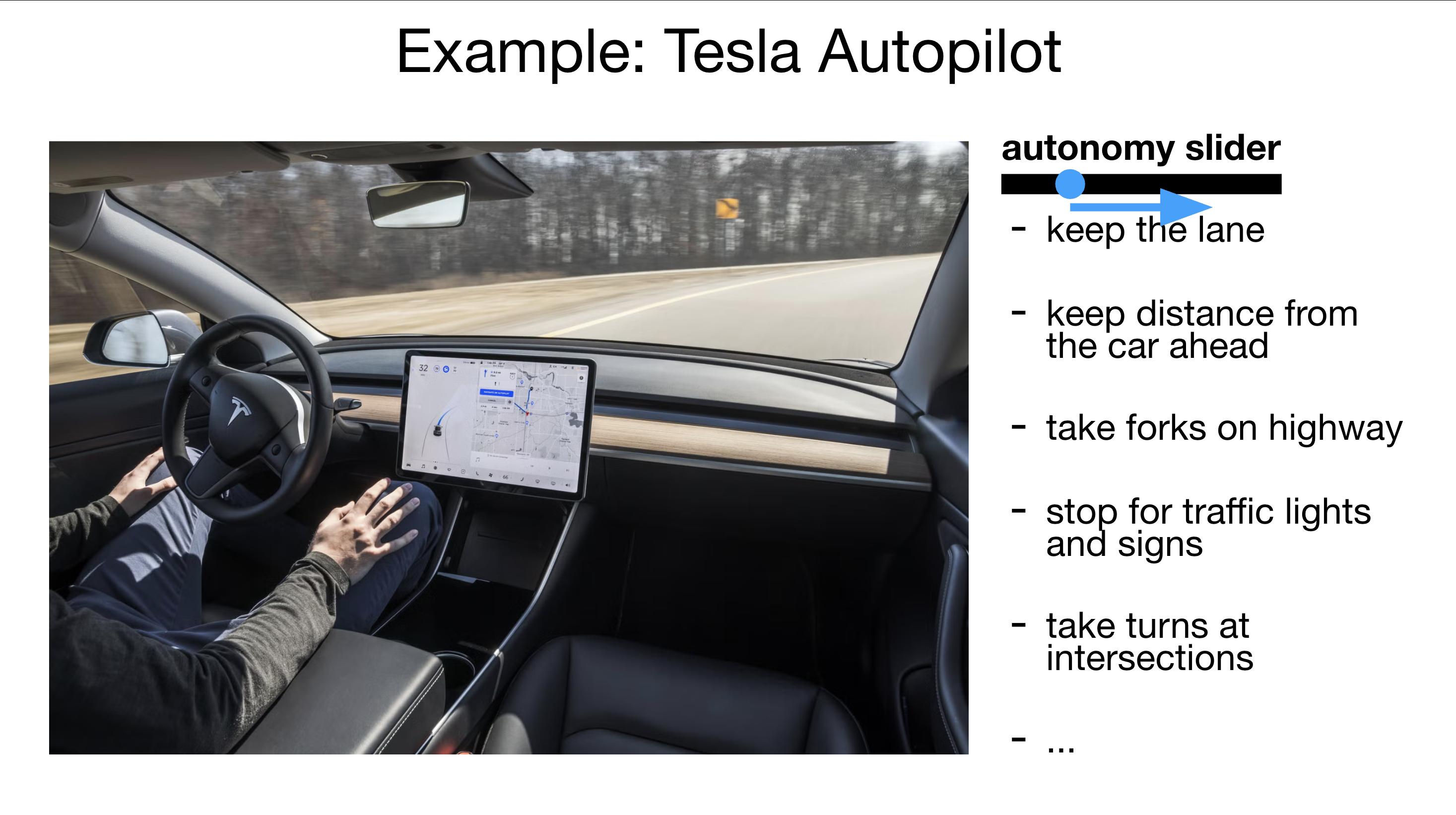

- Cursor progresses from “tap completion → modifying a chunk → changing an entire file → freely editing an entire repository,” allowing users to control granularity and authorization boundaries at any time.

- Perplexity’s “Quicksearch → Research → Deep research” also reflects gradual delegation: from quick answers to comprehensive searches/citations, and then to deeper research processes, with manual interruption and verification possible at each level.

- Essence: Start with assistance, then enhancement, and finally potentially full automation, unlocking step by step.

Limiting Over-Excited Agents

- Avoid generating massive changes all at once; instead, encourage small, controllable proposals. Keep the AI “on a short leash” to maintain human dominance and gatekeeping.

- The GUI minimizes review costs (diff, color, batch/individual, one-click undo), and the faster the loop, the smaller the errors.

Autopilot Analogy

Karpathy points out that Tesla’s Autopilot experience is about “getting partial autonomy right first”: starting with auxiliary driving features like lane keeping/adaptive cruise control, gradually progressing to higher capabilities (e.g., automatic lane changes, parking, summon features, complex driving tasks, and city road autonomous driving (FSD Beta)).

Software 3.0 products should evolve similarly: assistance → enhancement → high autonomy/full autonomy, rather than achieving it all at once.

Upgrading Systems for Agents

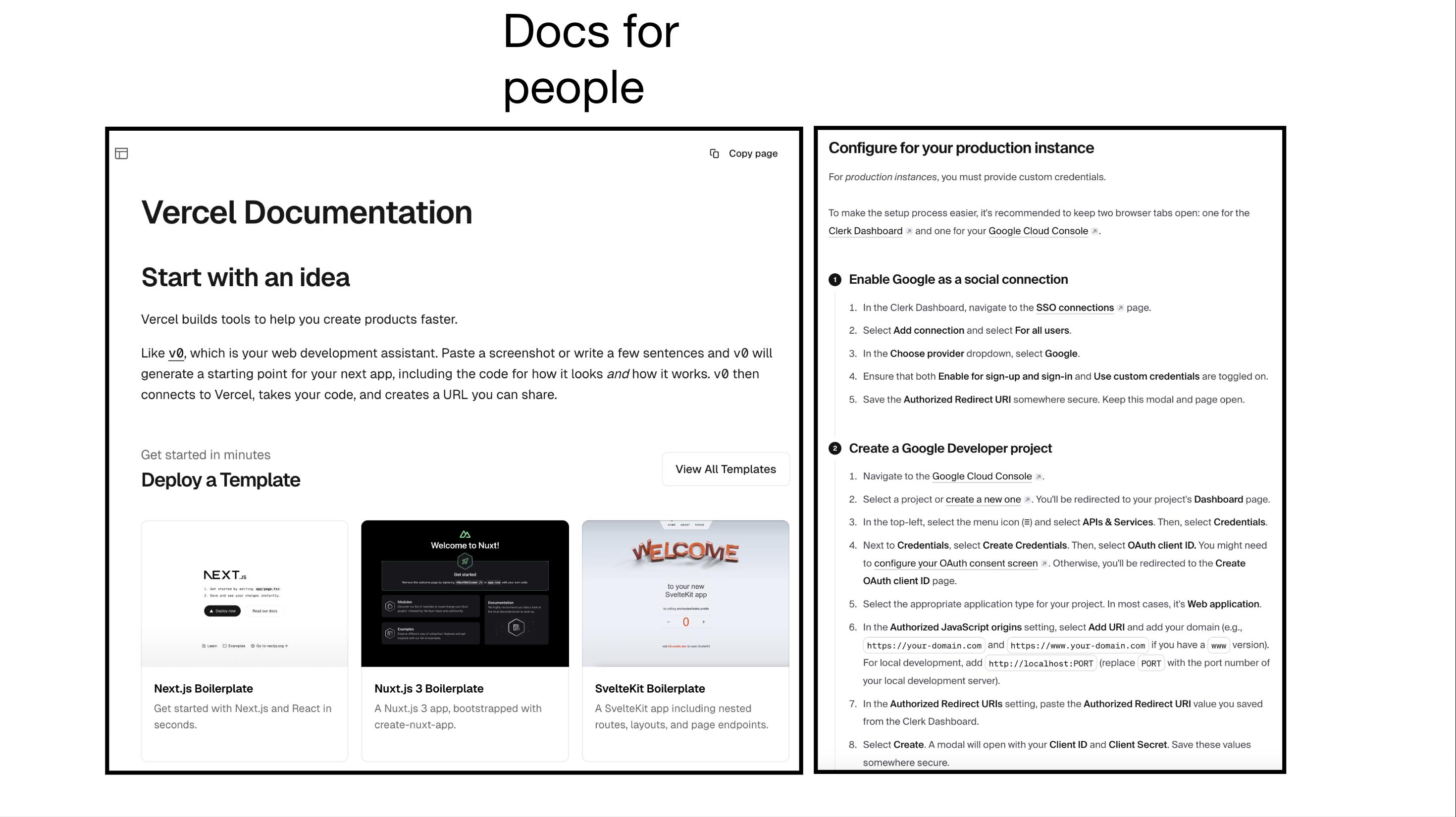

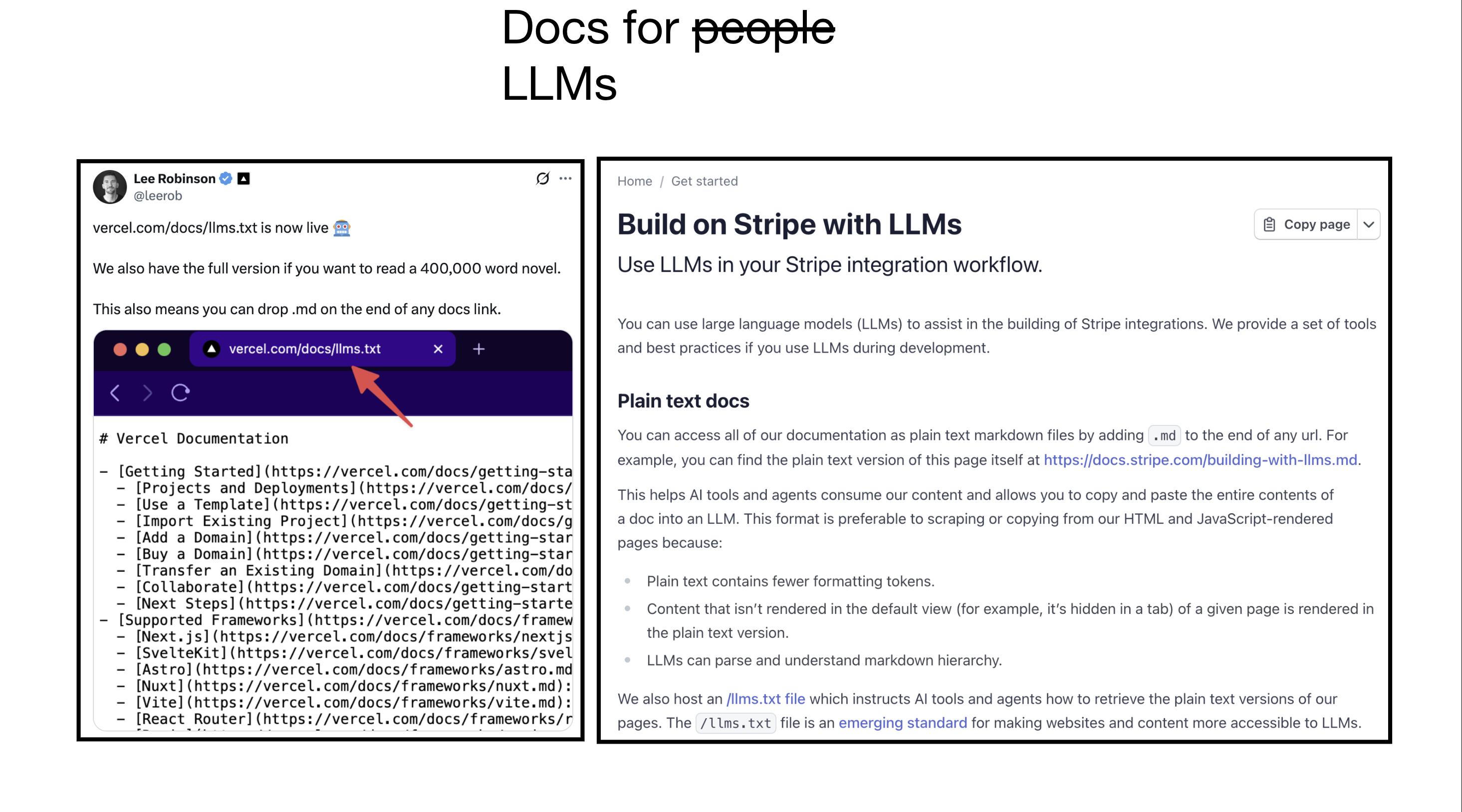

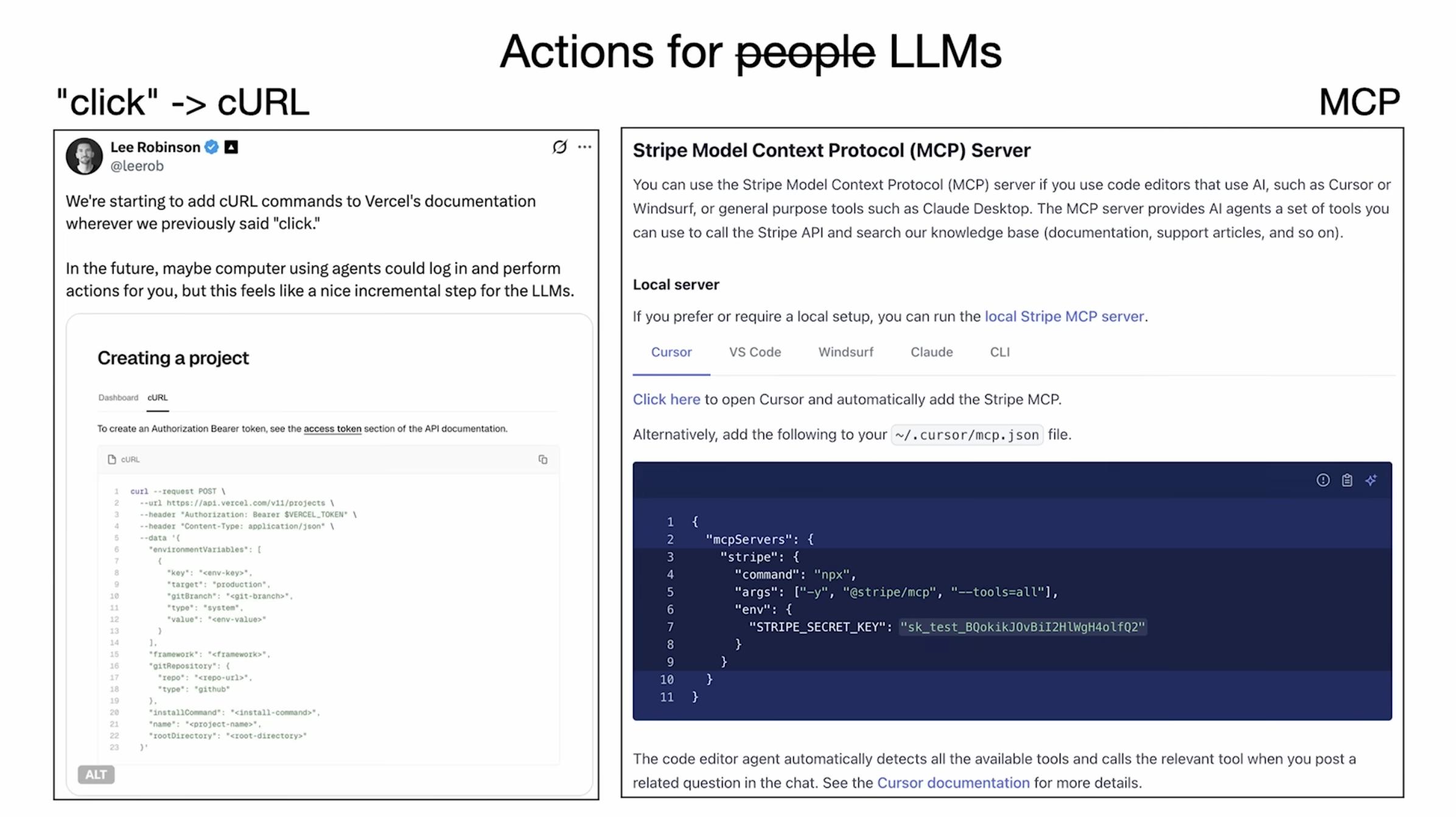

Documentation and Interfaces: Write system documentation for LLMs (not just for humans), providing llms.txt, structured/Markdown-friendly interface descriptions, deterministic calling conventions, and clear input/output examples.

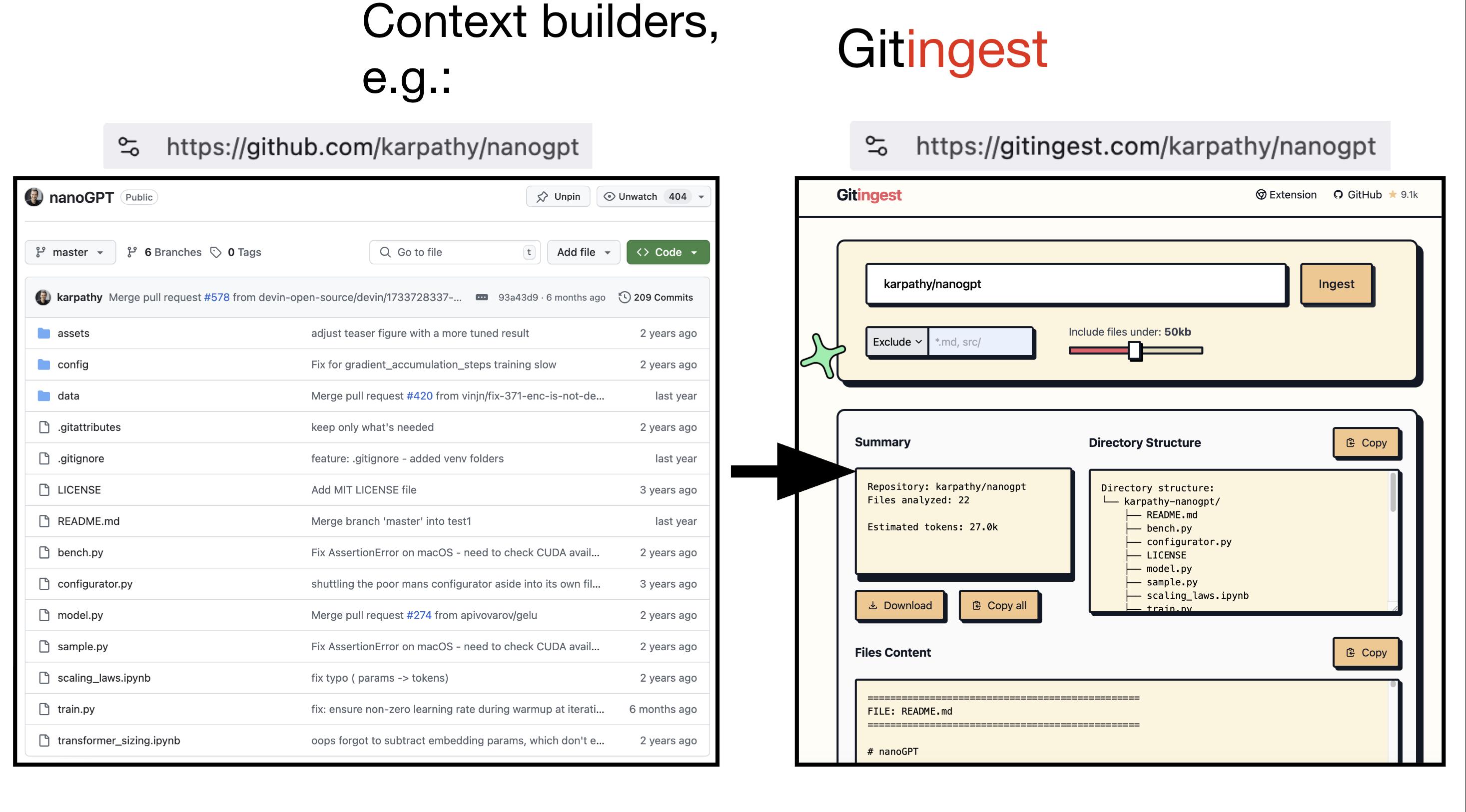

Protocols and Context Pipelines: Adopt more standardized tool protocols (like the MCP concept he mentioned) and context builders (e.g., tools that feed codebases/knowledge bases to agents), reducing the cost of agents exploring in the dark.

Education and Expanding Access: Everyone Can “Program in English”

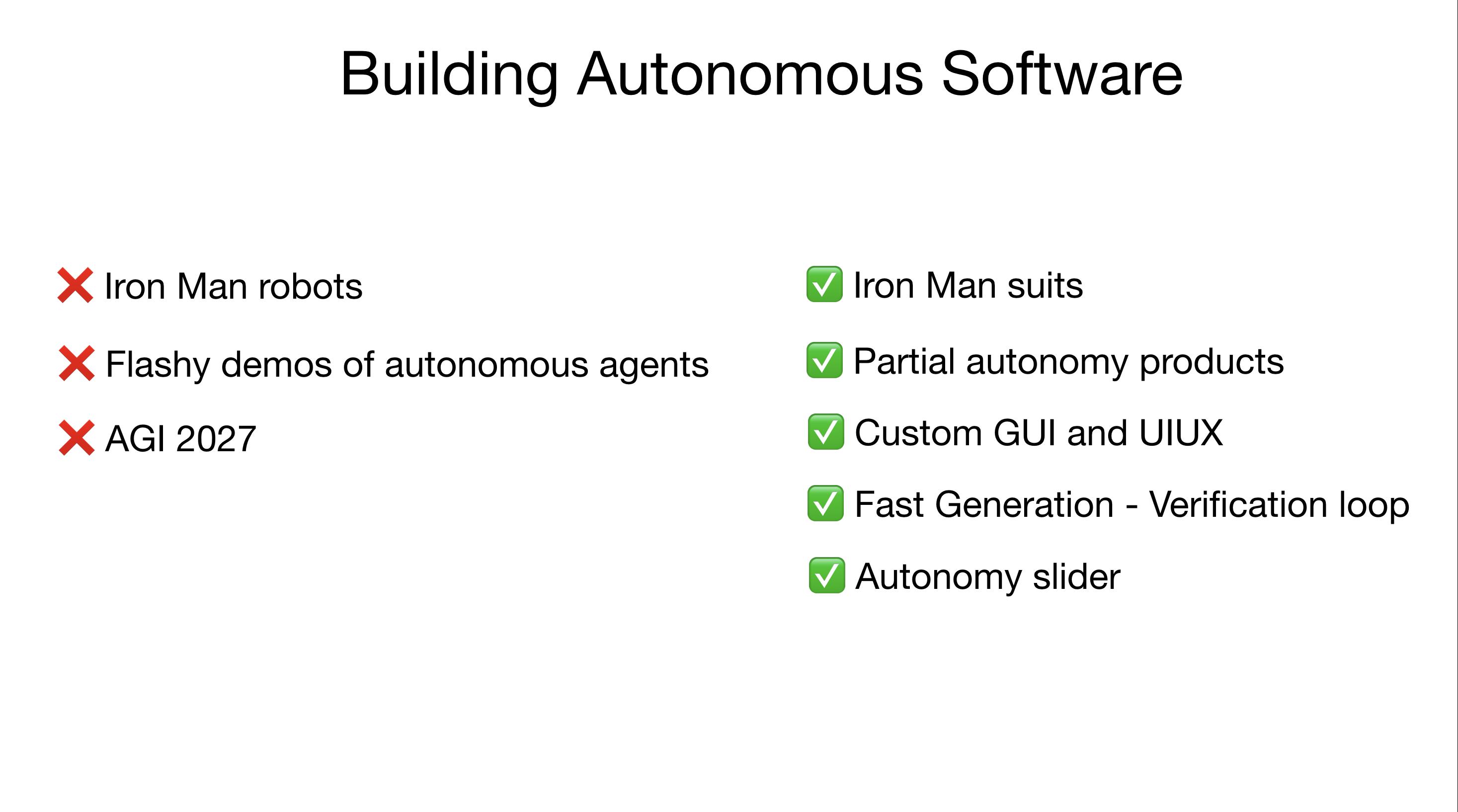

LLMs can act as both Suit (enhancement) and Robot (full agent); in the short term, the former is more promising.

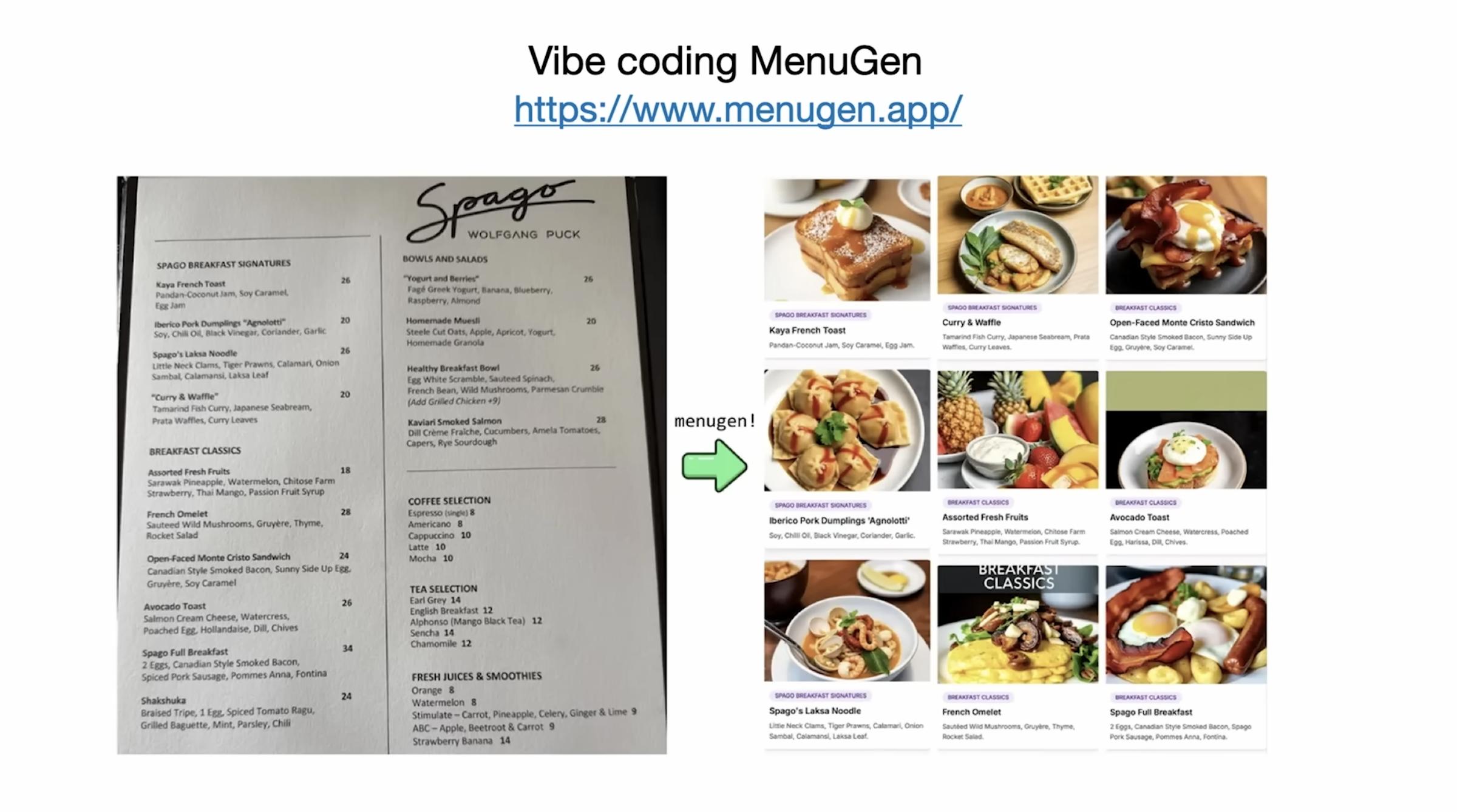

Using the viral tweet about “Vibe Coding” as an example:

- One can create an iOS app in a day without knowing Swift.

- Created MenuGen (which generates dish images from menus) in just a few hours of coding; the real time-consuming part was DevOps (logging in, payment, deployment).

Children’s Vibe Coding videos reinforce his belief that natural language programming is “gateway drug,” unlocking a vast new demographic.

Conclusion: The Agent Era on a Decade Scale

Karpathy uses the metaphor of Iron Man’s suit: LLMs are amplifiers of human capabilities; however, the real transformation won’t happen in a year or two but resembles a decade-long evolution. We need to design transitional forms of “partial autonomy” at the product level, using rapid generation ↔ verification to tame it into reliability and control.

Comments

Discussion is powered by Giscus (GitHub Discussions). Add

repo,repoID,category, andcategoryIDunder[params.comments.giscus]inhugo.tomlusing the values from the Giscus setup tool.